Rationalisation vs cognitive laziness

In the New York Times, professors Gordon Pennycook and David Rand explain why we believe in fake news. Two camps dominate this debate. One claims that our ability to reason is hijacked by our partisan convictions: that is, we’re prone to rationalisation. The other claims that the problem is that we often fail to exercise our critical faculties: that is, we’re mentally lazy. However, recent research suggests a silver lining to the dispute: both camps appear to be capturing an aspect of the problem.

These findings might have real implications for public policy, as the research suggests that the solution to politically charged misinformation should involve devoting resources to the spread of accurate information and to training or encouraging people to think more critically.

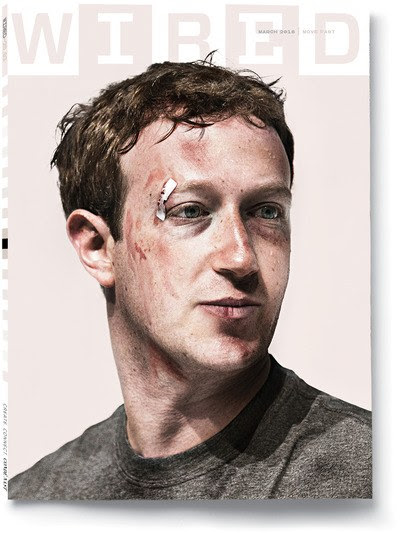

Chief executive of transparency

In a bold move to restore trust, CEOs of social platforms have entered transparency mode. In an op-ed titled “The facts about Facebook” in the Wall Street Journal, Mark Zuckerberg explains the company’s advertising strategy. In the article, he admits that the company’s data dealings can “feel opaque” and cause distrust. But he maintains that users are in control and that Facebook asks for users’ consent to access their data, as required by the GDPR.

In an extensive interview with Rolling Stone, Twitter CEO Jack Dorsey talks about himself and the social media company. Asked about the role the company plays in amplifying false narratives, he replies: “The question we’re now asking ourselves is, if that is indeed misleading, how do we stop its spread? We can amplify the counter-narrative. We do have a curation team that looks to find balance. A lot of times when our president tweets, a Moment occurs, and we show completely different perspectives. So a lot of times, people don’t just see that tweet.”

Lobbying or regulation, that is the question

European Commission documents obtained through a freedom of information request by Corporate Europe Observatory, a lobbying watchdog, shed light on Facebook’s lobbying strategy towards European Institutions. Laura Kayali describes in Politico how Facebook tried to discourage regulation, and how the company’s views on regulation led to tension with the European Commission.

The misunderstanding certainly stemmed from the fact that the approach to regulation in the EU differs from that in the US, where the company has its headquarters. Yet even the US regulator is considering fining Facebook for violating a legally binding agreement with the government to protect the privacy of personal data. In this context, US policymakers are considering data privacy legislation.

Some other news:

- The EU’s most hackable elections? Politico’s Laurens Cerulus points out the cybersecurity and disinformation risks ahead of May’s European elections, looking back at the history of hacking involving European governments.

- NewsGuard, a news-rating verification plug-in and a partner of Microsoft’s Edge browser, warns readers of the Daily Mail that “this website generally fails to maintain basic standards of accuracy and accountability”. The company bases its ratings on nine journalistic criteria and issues “Red” or “Green” credibility ratings, accompanied by detailed written “Nutrition Labels” for each website.

- How to use 4chan to cover conspiracy theories: practical tips by Daniel Funke in Poynter.

- Fake news in the fight against Ebola: rumours spread over the radio and WhatsApp critically hinder the work of health authorities and humanitarian organisations.

- How the #10yearchallenge sparked lots of fake and misleading images: the viral game has been an opportunity for a good deal of fake information to pop up.

Agenda and announcements

- 29 January @ Brussels: the European Commission will assess the first reports provided by the platforms that signed the European Code of Practice on Disinformation.

- 30 January – DisinfoLab Webinar: InVid video verification tool with Denis Teyssou from AFP

- 1 February @ Baltimore: Truth Decay: Deep Fakes and the Implications for Privacy, National Security… by University of Maryland Francis King Carey School of Law

HR corner

- The Institute for the Future (IFTF) is hiring a Lab Director to expand its Digital Intelligence Lab into a world-changing center for forward-looking research that examines how new technologies can be used both to benefit and challenge democracy and what we need to do to build a healthy and resilient digital society. If you meet these requirements, send a resume, references, and a cover letter explaining why you are the right person for this job to Katie Fuller at kfuller@iftf.org.

- First Draft is expanding its team in London and New York. A dozen positions are now open.

- NewsGuard, a new service that fights misinformation by rating news and information websites for credibility and transparency, is preparing to expand to several European markets, including the United Kingdom, Germany, Italy, and France. NewsGuard is looking for trained journalists, experienced editors and fact-checkers interested in joining its European editorial teams. Ideal candidates will be able to write and speak both in English and in at least one language commonly spoken in the countries listed above. Interested applicants should send a resume and brief cover letter to NewsGuard’s Executive Editor, Eric Effron, at eric.effron@newsguardtech.com.

- BuzzFeed News is hiring two contractors to help produce debunking videos.